This article is a sequel to musings I started here. Let’s take one more step in exploring what could be next: from Self-Driving Cars to Autonomous Barbers.

Update: after publishing, a couple of readers reached out and suggested that I am making up words. For the sake of those new to the field:

- ANI (Artificial Narrow Intelligence) – a type of AI designed to perform a specific task or set of tasks, such as image recognition, NLP, predictive modeling, and expert systems. Within specific fields you might also see related terms such as SLM (small language model), “weak AI,” etc.

- AGI (Artificial General Intelligence) – a type of AI designed to perform any intellectual task that a human can. AGI is capable of general reasoning, learning, and problem-solving, and is not limited to a specific domain. AGI is often referred to as “strong AI” or “human-level AI.” As of this writing, it is pure fiction.

Self-Driving Cars: The Pioneers of Physical ANI

The most prominent example of physical ANI in action today is the self-driving car. These vehicles, equipped with sensors and AI-driven algorithms, navigate the complexities of road systems, traffic, and, to a much lesser degree, unpredictable human behavior. With widely varying degrees of success, companies like Tesla, Waymo, and others have pioneered this field, turning what was once a staple of science fiction into today’s commute, arguably with fewer casualties. Self-driving cars exemplify how AI can take over tasks that require constant attentiveness and decision-making, freeing humans to focus on less mundane, more creative activities during their drives, like drinking beer and posting pictures to Instagram.

Robotic Cleaners: The Unsung Heroes of Chores

Another area where ANI has made an undeniable mark is in the automation of household chores. Robotic vacuum cleaners, like the Roomba, and their cousins that mop and even clean windows, are perfect examples of how AI can be tailored to perform specific tasks—keeping our living spaces clean without any of the grumbles usually associated with household chores. These devices navigate around furniture, adapt to different surfaces, and dock themselves for charging, all without any human intervention.

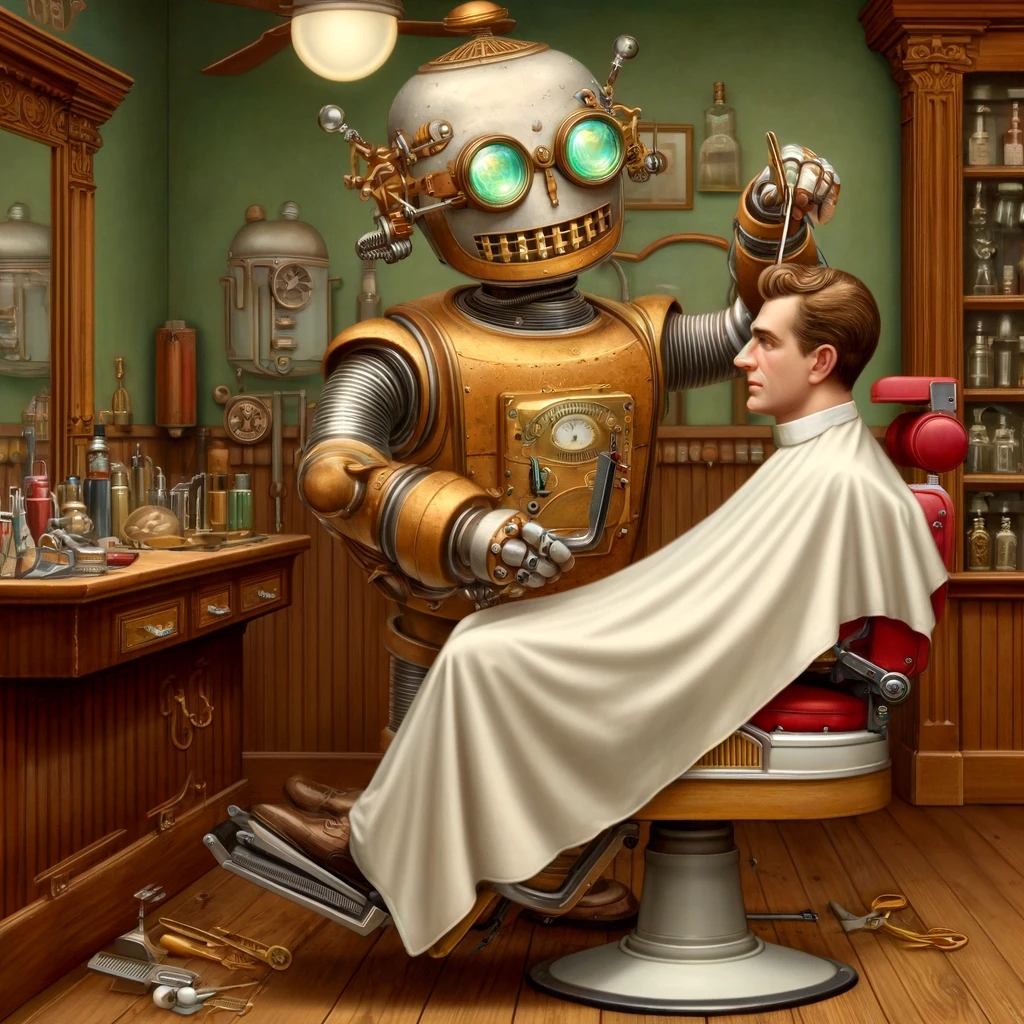

The Autonomous Barber: A Snip Too Far?

Imagine walking into a barber shop where an AI-powered machine greets you. Instead of a warm smile and a human touch, you’re met with a set of robotic arms equipped with scissors, straight razors, and combs. In the spirit of efficiency, this barber machine is programmed to perfect one hairstyle or provide a flawless shave. Every customer, regardless of personal style or the shape of their skull, walks out with the same rounded haircut and, quite possibly, a new and universal head shape. A terrifying thought, perhaps. This fictitious example highlights the limitations and absurdities we might face if we push ANI into roles that require a personal touch or aesthetic judgment.

And I certainly don’t expect to walk into a corporate office one day and see a piece of plastic behind the desk pretending to be a human HR specialist or receptionist. My good friends at loomhr.ai are already doing a great job with their pure-software approach to hireable AI.

Advancements in Practical Robotics

Beyond these everyday interactions, ANI is also transforming more specialized fields. In agriculture, drones and robotic systems monitor crop health, distribute fertilizers, and even harvest fruits without tiring. In manufacturing, AI-powered robots handle tasks that are dangerous or ergonomically challenging for humans, such as assembling heavy machinery or working with hazardous materials. These robots are programmed for specific tasks, enhancing safety and efficiency but always under human oversight to ensure that the nuances of quality and craftsmanship are maintained.

Clearly, in places like California, one can expect to see more AI-based cashiers at In-N-Out as the minimum wage climbs north of $20/hour, making human employees economically unviable.

A Grounded Future

As we integrate more AI into our lives, it’s essential to stay grounded in realistic expectations and practical applications of ANI. While it’s fun to speculate about AI barbers and humanoid robots, the true power of ANI lies in its ability to perform dedicated tasks that enhance our productivity and safety. By focusing on feasible and practical applications, we can harness the benefits of ANI without falling into the trap of over-hyped futuristic fantasies.

Indeed, the advanced technology behind the fashionable term “AI” is here, and it’s more practical and less hair-raising than you might expect. As we continue to innovate, let’s ensure that AI remains a tool that complements and enhances human abilities, rather than replacing the personal touch that makes our experiences genuinely human. But be careful not to succumb to the wishful thinking that this technology will completely change humankind, or that it will let all of us compose poetry or paint in oils and watercolors on the beach instead of working for a living.